Artificial intelligence is now built into almost every modern software platform. As a result, new stakeholders have to approve deals before they are final: lawyers, security teams, and internal groups that manage AI rules.

This changes the job for Solutions Engineers.

Your technical job is no longer just about how the software is built or how it connects with other tools. Now, it’s about trust, risk, and being able to defend your product.

Customers today won't be satisfied with just a great demo. They need to know:

- How your AI works.

- What customer data it uses.

- How it was trained.

- What happens if something goes *terribly* wrong.

If you’ve seen the new AI vendor questionnaires, you know what this means. These reviews often take the form of a full AI vendor questionnaire or a formal compliance questionnaire.

Simply put, customers want clear answers, and SEs are usually the first people who have to provide them.

Here is how you can prepare for this new focus on AI rules, and the 20 most important questions you should be ready to answer.

The Five Main Topics Every SE Should Know

Most AI questionnaires cover five main areas. If you understand these categories, you can guess most of the questions before you even receive them.

If this reminds you of answering Requests for Proposals (RFPs), you are right. AI rule reviews are becoming a big part of the modern RFP process in the generative AI era.

1. Ownership, Use, and Legal Agreements

Many AI questions focus on who owns what and who is responsible for problems. Customers want to know:

- Who owns the content that the AI creates?

- What rights do they have to use or sell that content?

- Who takes the risk if there is a problem with intellectual property?

These concerns are similar to the ones brought up in standard security questionnaires and checks on suppliers.

You don't have to be a lawyer. But you do need to understand your company's official stance on:

- Who owns the outputs.

- Protection against loss (indemnification).

- Permission to use the AI (licensing).

- How data is handled after the contract ends.

If you can't clearly explain these rules, the sale will stop when it gets to the legal team.

2. AI Features and How It Is Installed (Deployment)

This is where your technical skills are most important.

Customers want to know:

- Exactly what the AI does.

- Whether it helps users do their job better (augmentation) or makes decisions on its own (automation).

- What main models it uses.

- How it is set up and delivered.

- If it is completely ready for customers to use.

If you are using AI to help with presales workflows, it helps to know real-world AI presales use cases and broader resources such as an AI sales enablement guide.

You must be prepared to clearly explain:

- The goal and limits of the AI feature.

- The specific models or vendors you rely on.

- How it is installed, such as through a software service, an API, or on the customer's own computers (on premises).

- How mature the product is and how many customers are using it.

Being clear builds trust. Vague answers create doubt.

3. Data and Model Transparency

Transparency is one of the biggest factors in building trust in AI discussions.

Customers will want to know:

- What types of data were used to train the AI.

- If any open-source material was included.

- How you made sure you had the legal right and permission to use the training data.

- How you test for and fix bias.

- If the outputs are checked to make sure they are original.

If your company operates in Europe, you should know the rules in the EU AI Act.

And when discussions turn to AI hallucinations or unsafe outputs, it helps to know the best practices from guides on how to prevent AI hallucinations.

You might not own all these documents, but you should know where they are kept so you can find them fast. Having strong internal AI knowledge management makes this much easier.

4. Governance, Risk, and Compliance (GRC)

Many large business customers now have internal teams known as AI governance committees that manage AI rules. Before they approve a purchase, they need documents like:

- Assessments on how data is protected (Data Protection Impact Assessments).

- Bias assessments.

- Summaries on how the AI makes decisions (Explainability summaries).

- Privacy reviews.

This area is very similar to standard company security and trust conversations. If your company has official trust documentation, it probably resembles what trust center products or frameworks for data security in AI describe.

Expect questions like:

- Are there human checks on automated processes?

- How is data residency (where data is stored) handled?

- What compliance certificates (proof of following rules) does your company have?

Smart SEs bring in the legal and security teams early, instead of waiting until the end of the sales process.

5. Monitoring, Security, and Controls

To many customers, AI seems like a "black box" (a system whose inner workings are a mystery). Your job is to open that box and show them how it works.

Be ready to explain:

- How you track and record activity (Logging and monitoring capabilities).

- Strategies to reduce false information (Hallucination mitigation strategies).

- Ways to stop people from tricking the AI (Prompt injection prevention).

- How you remove personal information (PII filtering).

- Standards for scrambling data (Encryption standards) and rules for who can access it (access controls).
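When a reviewer asks what PII filtering actually does, a small illustration can help. The following is a minimal sketch, not a description of any particular product: the two patterns, the placeholder tags, and the `redact_pii` helper are all assumptions made for this example, and real filters usually cover far more data types.

```python
import re

# Hypothetical patterns for illustration only. Production PII filtering
# typically needs much broader coverage (names, addresses, local ID formats).
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact_pii(text):
    """Replace each match with a placeholder tag and count the redactions."""
    counts = {}
    for label, pattern in PII_PATTERNS.items():
        # subn returns the rewritten text and the number of substitutions.
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


prompt = "Contact jane.doe@example.com, SSN 123-45-6789, about the renewal."
clean, counts = redact_pii(prompt)
print(clean)   # text that would be passed on to the model
print(counts)  # counts that could go into an audit log
```

Returning the counts alongside the cleaned text ties the filtering answer to the logging answer: the log can record that something was redacted without storing the sensitive value itself.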

If your company keeps a well-organized RFP response database or a central company knowledge base, this is incredibly valuable. You shouldn't have to write these answers from scratch for every deal.

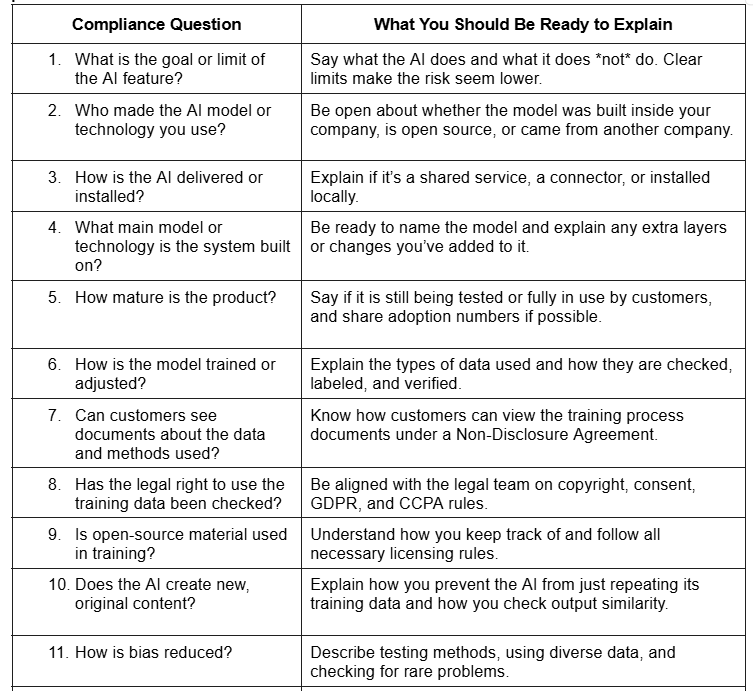

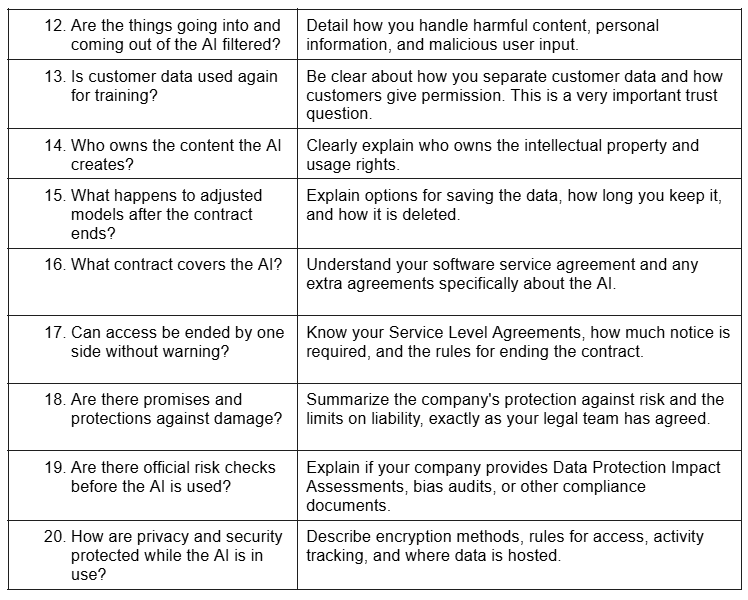

The 20 AI Compliance Questions You Must Be Ready For

These are the main questions that keep showing up in AI questionnaires. You should have clear, consistent answers for every one.

Many of these will look familiar if you have ever answered a supplier questionnaire or have experience with writing security questionnaires that close deals.

If you don't have standard answers for these, your team will have trouble handling a large number of deals. This is why structured sales content management and thoughtful presales enablement practices are so important.
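One way to picture a library of standard answers is as a small lookup that also flags entries overdue for review. This is only a sketch under stated assumptions: the `ApprovedAnswer` fields, the sample entries, the 180-day review window, and the keyword-overlap matching are all hypothetical and stand in for whatever tool your team actually uses.

```python
import re
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ApprovedAnswer:
    topic: str
    question: str
    answer: str      # approved wording, kept as a placeholder here
    owner: str       # team that signed off on the wording
    reviewed: date   # date of the last review


# Hypothetical entries; the questions echo topics from this article.
LIBRARY = [
    ApprovedAnswer("ownership", "Who owns the content that the AI creates?",
                   "<approved wording>", "Legal", date(2024, 1, 10)),
    ApprovedAnswer("governance", "How is data residency handled?",
                   "<approved wording>", "Security", date(2024, 10, 1)),
]


def tokenize(text):
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def find_answers(query, library, today, max_age_days=180):
    """Return (entry, is_stale) pairs, ranked by shared keywords."""
    wanted = tokenize(query)
    hits = []
    for entry in library:
        overlap = len(wanted & tokenize(entry.topic + " " + entry.question))
        if overlap == 0:
            continue
        stale = (today - entry.reviewed).days > max_age_days
        hits.append((overlap, stale, entry))
    # Most shared keywords first; among ties, fresh entries before stale ones.
    hits.sort(key=lambda hit: (-hit[0], hit[1]))
    return [(entry, stale) for _, stale, entry in hits]


for entry, stale in find_answers("Who owns AI outputs?", LIBRARY, date(2024, 12, 1)):
    flag = "needs re-review" if stale else "current"
    print(f"{entry.topic}: {entry.question} (owner: {entry.owner}, {flag})")
```

The staleness flag is the design point: a standard answer is only useful if someone still stands behind it, so each entry records an owner and a review date. The matching is deliberately naive and does not filter common words like "the".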

From Technical Expert to Risk Interpreter

Conversations about AI rules are not just a side task in the sales process.

They are the sales process.

The best SEs today are not just experts in their product. They are risk interpreters. They can clearly explain product features, legal rules, security concerns, and buying processes without losing credibility in any meeting.

Being prepared is the most important thing:

- Create a central place for all approved AI answers.

- Talk to your legal and security teams early.

- Practice with mock questionnaire reviews inside your company.

- Invest in strong enterprise knowledge management.

- Practice explaining complex topics about rules (governance) in plain, simple language.

When the AI rule questions come, and they will come, the SE who answers clearly and confidently often becomes the reason the deal is signed.

Presales has always been about building trust. In the age of AI, you earn that trust through transparency.
